That’s a mistake.

Qualitative research can increase the effectiveness of your UX experiments significantly.

Get it right, and you’ll get users to value faster; you’ll turn more customers into advocates; and you’ll save time and money on product development.

Qualitative research has been a huge part of my success as Head of Growth at Userpilot. In this blog I’m going to explain why, and how you can use it to supercharge results in your business.

Feel free to jump ahead using the links below, or read on…

Why Do Qualitative Research? Here are Five Reasons

#1 You lack the numbers for quantitative research

To derive statistically significant results from quantitative data, you need large numbers of users.

I agree with Andrew Capland of PostScript: in this interview I did with him, he said that unless you have more than 1,000 users a month to work with, it will take so long to generate valid results that you might as well rely on educated guesses in designing UX experiments (21:30).
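To get a feel for why small user bases struggle here, this is a rough sketch of the standard sample-size approximation for comparing two conversion rates. The 10% baseline, the 20% relative lift, and the 5% significance / 80% power settings are illustrative assumptions of mine, not figures from Andrew's interview.

```python
import math
from statistics import NormalDist

def users_per_variant(baseline, lift, alpha=0.05, power=0.80):
    """Approximate users needed in EACH variant of a two-sided A/B test.

    baseline: current conversion rate (e.g. 0.10)
    lift: relative improvement you hope to detect (e.g. 0.20 for +20%)
    """
    p1 = baseline
    p2 = baseline * (1 + lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Assumed scenario: 10% baseline conversion, hoping to detect a 20% lift
n = users_per_variant(baseline=0.10, lift=0.20)
months_at_1000_users = math.ceil(2 * n / 1000)  # two variants share the traffic
print(n, months_at_1000_users)
```

Under those assumptions you need a few thousand users in each variant, which at 1,000 users a month means waiting the better part of a year for a single experiment.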

#2 You are looking for unknown user problems

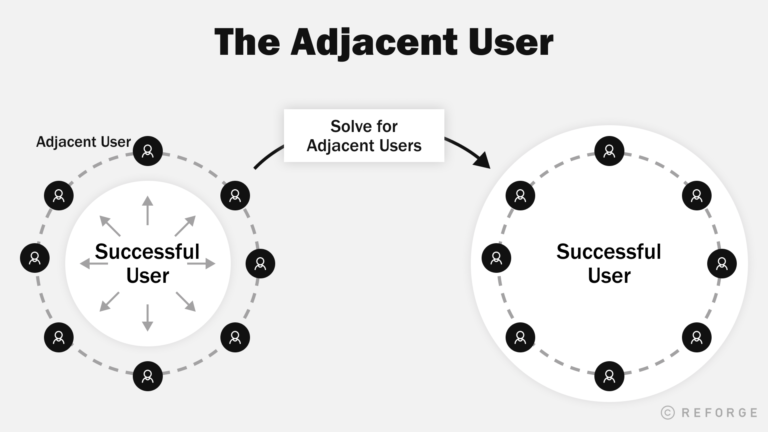

Your audience is likely always changing. As your product grows, your user base will expand into new segments. I like Bangaly Kaba’s term “adjacent users” for these people, who sit beside your well-understood core audience.

Questions of “how much” and “how many” can’t tell you who these people are or what they need.

And if you can’t map these new audiences’ needs and expectations, you can’t solve for them.

#3 You have little prior knowledge of an area

Going back to Andrew Capland again, qualitative user testing and research is vital for establishing Product Market Fit (20:51) – that is, finding out whether what you have is useful/valuable at all.

Going quantitative at this stage is really missing the point.

#4 Qualitative research can be more persuasive

Sometimes the bare figures leave people cold – even when they’re mathematically very compelling.

Qualitative research can place data into the context of human lives and user stories in a way that can be really important for getting stakeholder buy-in.

#5 You want to improve UX through several quick iterations

Qualitative research fits better with an iterative development cycle for all the reasons I mentioned earlier:

- You don’t need many returns before patterns begin to emerge

- It can uncover and explain unexpected needs and problems

- It can build up a real picture of your SaaS’s place in users’ lives

Ask Your Users

There are two fundamental approaches to doing qualitative user research:

- You can ask users what they think

- You can observe what users do

There are four types of research I recommend under the “ask” heading: formal interviews, informal interviews, surveys and microsurveys.

In this section, I’ll spell out what I’ve learned about getting the best out of these techniques.

Formal Interviews

A mistake I used to make in interviewing users was to go in armed with a list of questions, thinking I needed to get through all of them.

But as I explained on this podcast (21:47), the real value comes from the opportunity the interview format gives you to dig deeper and to keep asking “why?”.

So if you only get through a few of your planned questions because you’ve gone off on some fascinating deep-dive, that’s ok. Remember, the goal is understanding the user.

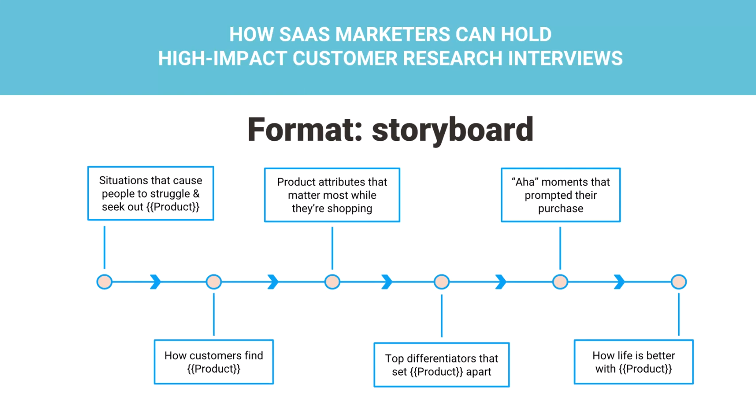

Claire Suellentrop has a great suggestion for coming at user interviews from a different direction instead of the traditional question list. In this presentation, she talks about a storyboard.

She adopts a Jobs To Be Done framework to focus on the “struggling moment” users find themselves in and the “better life” they picture beyond that (10:14).

The storyboard helps you construct a picture of the user’s mental state/model as they progress through your user journey.

This also has the great benefit of helping keep you focused on what users actually do as opposed to what they say.

People often make decisions pre-rationally or emotionally and then come up with reasons afterwards to justify why they did what they did.

So questions of “why did you do this?” and “how do you feel about that?” can give you misleading answers.

Similarly, interviewees can often be led into telling you what you want to hear. Questions like “do you think such and such?” are leading – the interviewer is putting an idea into the interviewee’s head.

As Claire says in the same presentation (15:47), asking “what” questions will keep users focused on what they actually did rather than how they interpreted it afterwards.

For example:

- What was it like?

- What can you do now that you couldn’t do before?

- What stopped you from using this feature?

Informal Interviews

I am a huge fan of this approach, although it’s neglected compared to the others.

I first heard about this approach from Malte Scholz of Airfocus, a product management tool that specializes in helping PMs do better product prioritization.

He said, “During the informal interviews, a customer or future prospect feels more comfortable. They don’t have their guard on. It’s when they casually drop knowledge bombs without realizing that they’re giving real-actionable insights.”

Whenever I want to get user feedback, I start by finding where my users hang out.

That is, where they go to find out information and to talk to each other.

And when I get there, I just join in the conversation and learn.

This is how I made the decision to pivot Userpilot’s growth strategy away from advertising and cold outreach towards SEO, for example.

When I got involved in Product Manager communities, it became clear to me that:

- PMs don’t wait for or respond to approaches – when they want a solution or some information, they go out and find it themselves

- They’re used to searching for answers (often on technical sites like Stack Overflow and GitHub, but also Slack channels, Medium etc)

- They like to get feedback on their products – even when that’s unsolicited

So I would start conversations by offering my opinions on their products, and then just say “let’s see what we can learn from one another” – picking up information about how my audience think, work and live from there.

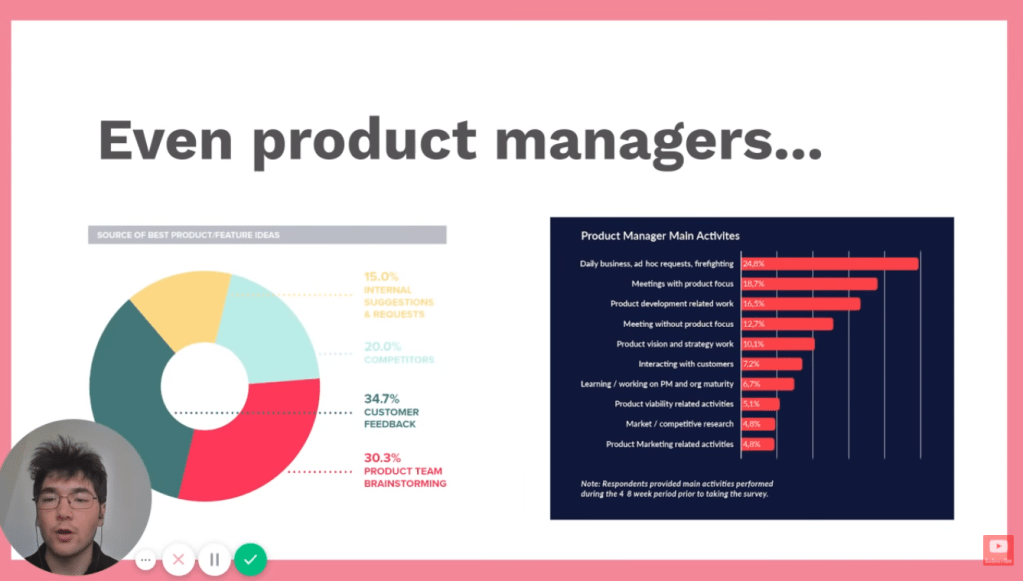

It’s strange: as Valentin Huang of Harvestr points out in this video (2:10), Product Managers cite customer feedback as the top source of new feature ideas (34%) and yet they spend a mere 7.2% of their time interacting with customers. What a missed opportunity!

A great tip is to build this kind of informal, ongoing relationship with your power users.

You can just be chatting and picking up vital data and insights at every interaction point.

It’s more open than formal interviews, it’s less time-consuming and it really helps you to immerse yourself in your users’ perspectives and Jobs To Be Done.

You can extract insight from any kind of interaction. Interactions don’t have to be live, and they don’t have to be with you.

Chat logs, support tickets, reviews – all these types of unstructured user feedback are legitimate sources of qualitative research.

Surveys

Surveys are one type of research tool that nicely illustrates how blurry the line between “qualitative” and “quantitative” really is.

If you ask 1000 users “do you prefer this or that?”, surely it’s a quantitative study!

The difference really lies in how structured or unstructured the questions and answers are.

- Structured surveys show you which options users prefer among the things you have decided matter

- Unstructured surveys show you what users themselves think matters

If you want qualitative information – about observations, thoughts, feelings, needs etc – your surveys (and interviews, for that matter) need to ask Open Ended Questions.

Let users answer in their own words.

This reveals so much more than multiple choice or drop-down option lists, because:

- It shows the terms and language users are working with

- When multiple users say the same things in their own words, the correlation is much more powerful than when options are limited

- Conversely, differences will help you to segment users into separate groups

Microsurveys

There are a few problems with surveys that make me less enthusiastic about them than some of the other methods here:

- You don’t really have the opportunity to dig deeper with the follow-up questions that interviews or informal conversations permit

- Response rates tend to be low – perhaps 10 to 30% for our sector, tending towards the lower end the less regular contact with users you have. That means you need to send a lot of surveys to get useful results

Microsurveys – super short, sometimes just single question surveys – are a different matter though.

When you have only one question to ask, it is possible to pose a follow up without getting into a mess of branching questions and answers.

You see this a lot with NPS surveys.

Alongside the NPS score question, you can dig deeper and ask “why?” with a follow-up question that asks the user to explain the score they gave.
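For reference, here is a minimal sketch of how the score and the “why?” answers fit together. It uses the standard NPS definition (percentage of promoters scoring 9 or 10, minus percentage of detractors scoring 0 to 6). The responses are invented sample data, not real Userpilot results.

```python
def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))

# Invented sample data: (score, answer to the follow-up "why?" question)
responses = [
    (10, "The onboarding checklist got me set up in a day"),
    (9, "Support answers fast"),
    (9, "Easy to share with my team"),
    (7, "Does the job"),
    (3, "Could not find the export option"),
    (6, "Too many emails"),
]

score = nps([s for s, _ in responses])
# The follow-up answers from detractors are where the qualitative insight is
detractor_reasons = [why for s, why in responses if s <= 6]

print(score)
print(detractor_reasons)
```

The number on its own is quantitative. It's the list of reasons behind the low scores that tells you what to fix.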

What is great about microsurveys is that you can build them into critical points in your UX, to get immediate, contextual, task-focused feedback from your users.

This allows you to get feedback on very specific aspects of your UX – particular features, help center content, support etc.

Many microsurvey tools will also allow you to segment which users get asked which questions and when.

That level of contextuality pays off handsomely in terms of response rates. It’s common to see in-app microsurveys getting response rates of 40 to 50%.
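To put those response rates side by side, here is a quick back-of-the-envelope sketch. The target of 100 responses is an arbitrary assumption of mine, and the rates are simply the ranges quoted above.

```python
import math

def sends_needed(target_responses, response_rate_pct):
    """How many users you must ask to expect the target number of replies."""
    return math.ceil(target_responses * 100 / response_rate_pct)

TARGET = 100  # assumed number of responses you want to collect

scenarios = {
    "survey, low end (10%)": 10,
    "survey, high end (30%)": 30,
    "in-app microsurvey (40%)": 40,
    "in-app microsurvey (50%)": 50,
}

needed = {label: sends_needed(TARGET, pct) for label, pct in scenarios.items()}
for label, count in needed.items():
    print(f"{label}: ask {count} users")
```

At the low end, a traditional survey needs five times as many sends as an in-app microsurvey to collect the same number of answers.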

Watch Your Users

Like I said earlier, sometimes emailing and asking users doesn’t get to the heart of the matter.

Fortunately there are tools that let you observe exactly what they are doing to extract qualitative research insights.

I’m going to talk about three types: session recordings, heatmaps and A/B testing.

Session Recordings

You guys here at Smartlook don’t need me to tell you how helpful session replays can be.

By watching exactly how users go about interacting with your product, you get an unmediated view of how all the elements of your UX fit together.

A lot of people don’t watch enough of these. Andrew Capland sets aside time every Friday to do it (18:00), but no doubt about it – it can be boring!

And so a lot of Product Managers miss out on valuable insights.

This is where clever qualitative research features come in.

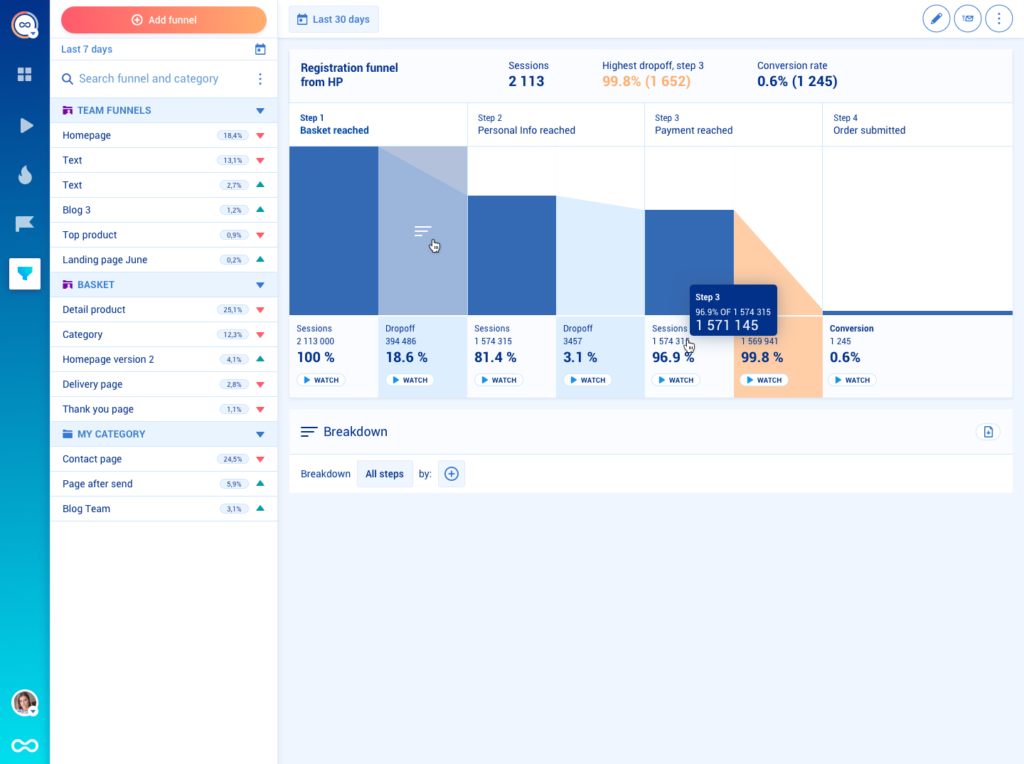

What is especially cool about Smartlook’s recorder is how you can create funnels using events to really dig into pain points in the user journey.

Using features like this, you can compile multiple users’ stories into more quantitative pictures of problems that keep cropping up. Once you can see those UX glitches, you can start to fix them.

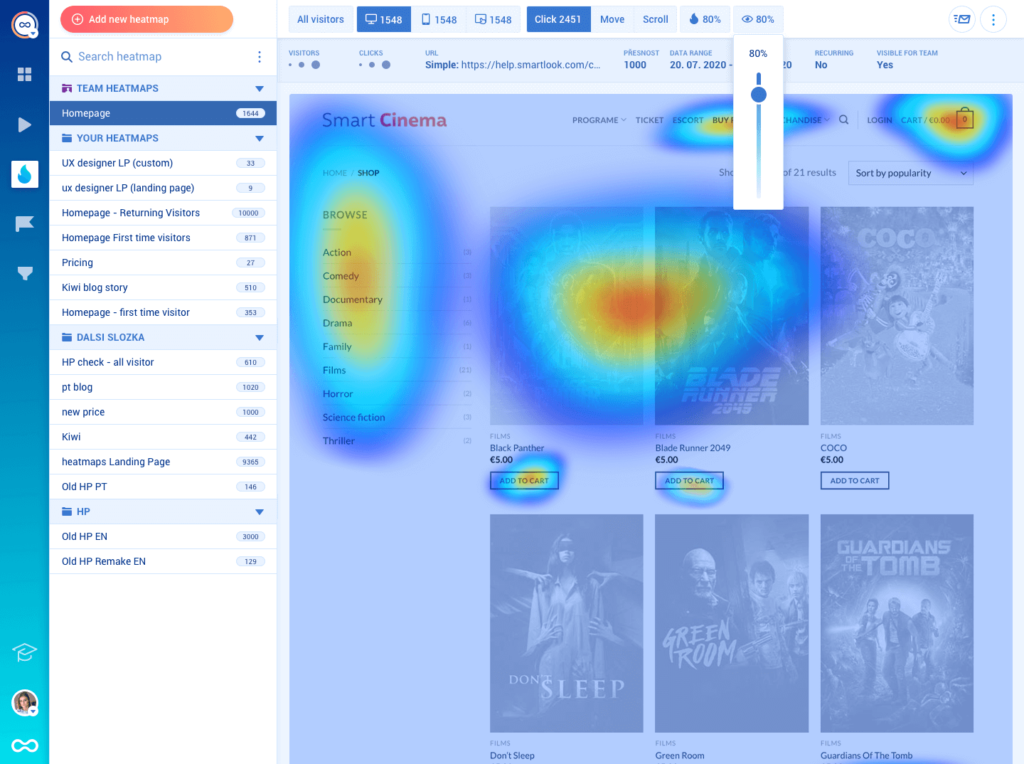
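If you want to see the idea behind an event funnel in miniature, here is a rough sketch. This is not Smartlook's API: the event names, user IDs and log format are all hypothetical, and it ignores event order and timing for simplicity.

```python
from collections import defaultdict

# Hypothetical funnel steps and event log of (user_id, event_name) pairs
FUNNEL = ["signed_up", "created_project", "invited_teammate"]
EVENTS = [
    ("u1", "signed_up"), ("u1", "created_project"), ("u1", "invited_teammate"),
    ("u2", "signed_up"), ("u2", "created_project"),
    ("u3", "signed_up"),
    ("u4", "signed_up"), ("u4", "created_project"),
]

def funnel_report(events, steps):
    """Return (step, users_reaching_step, users_lost_before_next_step) rows."""
    seen = defaultdict(set)
    for user, name in events:
        seen[user].add(name)

    # A user "reaches" a step only if they also completed every earlier step
    reached_per_step = []
    reached = set(seen)
    for step in steps:
        reached = {u for u in reached if step in seen[u]}
        reached_per_step.append(reached)

    rows = []
    for i, step in enumerate(steps):
        is_last = i + 1 == len(steps)
        next_reached = reached_per_step[i] if is_last else reached_per_step[i + 1]
        lost = sorted(reached_per_step[i] - next_reached)
        rows.append((step, len(reached_per_step[i]), lost))
    return rows

report = funnel_report(EVENTS, FUNNEL)
for step, count, lost in report:
    print(step, count, lost)
```

The counts give you the quantitative picture. The list of users lost at each step tells you whose recordings to go and watch.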

Heatmaps

That leads us on nicely to heatmaps.

Heatmaps are aggregations of where users are clicking within your app, or where they are looking.

Again, this can help you to unearth issues that users perhaps can’t even put into words.

For example, you’ll be able to see how many people are not scrolling down the navigation bar to discover an under-used feature.

Would it get more attention if it was above the fold?

Heatmaps are especially valuable for identifying design issues like this.

When you find one, why not install a contextual tooltip to draw people’s attention to the solution? Or create a triggered microsurvey to find out how they feel about the situation they find themselves in?

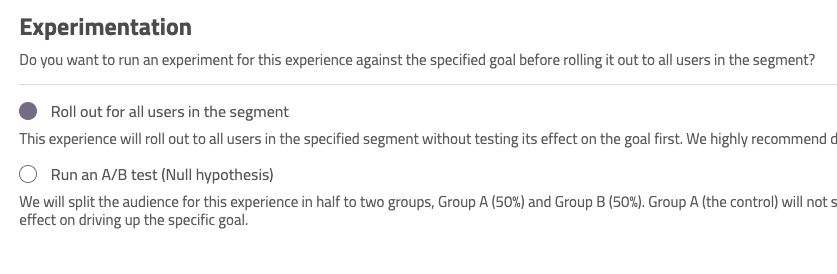
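The aggregation behind a click heatmap is simple enough to sketch in a few lines. The click coordinates, the 100-pixel cell size and the 600-pixel fold position below are all made-up assumptions. A real heatmap tool does much more (rendering, scroll depth, device handling).

```python
from collections import Counter

def click_grid(clicks, cell=100):
    """Count clicks per cell-by-cell pixel square; keys are (column, row)."""
    return Counter((x // cell, y // cell) for x, y in clicks)

def share_below_fold(clicks, fold_y=600):
    """Fraction of clicks that land below an assumed fold position."""
    if not clicks:
        return 0.0
    return sum(1 for _, y in clicks if y >= fold_y) / len(clicks)

# Invented (x, y) click positions in pixels
clicks = [(40, 30), (60, 80), (45, 950), (310, 20), (55, 60)]

grid = click_grid(clicks)
hottest_cell, hottest_count = grid.most_common(1)[0]
below = share_below_fold(clicks)

print(hottest_cell, hottest_count)
print(below)
```

A feature that sits in a cold cell below the fold is a good candidate for the tooltip or microsurvey follow-up.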

A/B Testing

Heatmaps and aggregated screen recordings come into their own when you combine them with A/B tests.

A/B tests are typically a quantitative research tool – they literally show “how many” users prefer option A to option B under certain conditions.

But these other tools allow you to dig into WHY users are favoring one option over another.

I’ve written a lot about product experiments and A/B tests before.

They are vital to improving UX, because they let you put the insights and qualitative research you’ve collected from some of the other methods we’ve talked about into action.

Talking to users is great for finding out what they want to see and what problems they are encountering, but an A/B test lets you put a potential solution there in front of them and see what they make of it.

If the user behavior you see from the test doesn’t fit with the feedback – well, maybe you need to find another solution to that problem!

Turn Data to Insights

A big challenge many people find with qualitative research is turning a mass of feedback into actionable insights.

So I want to end with my five top tips to help you complete this critical step:

#1 Record and transcribe everything

Never take notes during interviews! When you do this, you are editing and selecting. Qualitative research is always a co-creation process between interviewer and interviewee, but you have to take precautions to minimize the slant you put on what’s said yourself.

Besides, the precise words your users use are really important – as we’ve already said.

#2 Group responses by simple themes

Once you have all your transcribed interviews and survey returns, collate the data in a spreadsheet.

There are research tools that can help you do this, but I find Google Sheets is good enough 99% of the time.

Then study those returns for patterns – simple one- or two-word themes that keep recurring.

Once you’ve done that, you can further segment the data – cross referencing the answers of people who mentioned each theme against one another.
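If you'd rather script this step than do it by hand in a spreadsheet, here is a minimal sketch of theme tagging and cross-referencing. The themes, keywords and responses are invented for illustration. Keyword matching is a crude stand-in for actually reading the transcripts, so treat the output as a starting point only.

```python
from collections import Counter
from itertools import combinations

# Hypothetical themes and the keywords that signal them
THEMES = {
    "onboarding": ["setup", "onboarding", "getting started"],
    "pricing": ["price", "expensive", "cost"],
    "integrations": ["integration", "slack", "zapier"],
}

# Invented survey and interview snippets
responses = [
    "Setup took ages and the Slack integration kept failing",
    "Too expensive for a small team",
    "Getting started was confusing, and it is expensive",
    "I love the Zapier integration",
]

def tag(text):
    """Return the set of themes whose keywords appear in the text."""
    lowered = text.lower()
    return {theme for theme, words in THEMES.items()
            if any(word in lowered for word in words)}

tagged = [(text, tag(text)) for text in responses]

# How often each theme comes up
theme_counts = Counter(t for _, themes in tagged for t in themes)

# Cross-reference: which themes are mentioned together by the same person
pair_counts = Counter(
    pair for _, themes in tagged for pair in combinations(sorted(themes), 2)
)

print(theme_counts)
print(pair_counts)
```

The pair counts are the cross-referencing step: they show which themes tend to be raised by the same people, which is a handy first cut for segmentation.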

#3 Be specific

The narrower the scope of your questions, the more concentrated the answers you get will be. This can really help make qualitative research more solid and focused on solvable problems.

Once your qualitative UX research methods have proven themselves with a quick, narrow win, you’ll be in a better position to get buy-in for more wide-ranging studies!

#4 Enrich your data wherever you can

I’m not just talking about services like Clearbit that scour the internet to flesh out your contacts’ profiles here (although this can be really helpful).

I’m talking about collecting data during every interaction with your users to find out more and more about how they live and work.

“How many other people from your organisation use this tool as well?” is a great question that can completely change the context of how you see a user, for example.

#5 Get more than one pair of eyes on the data

We will always have a bias.

Your interpretation of your data may not be the only one. Get someone else to go over your findings and validate what you think they show before you act on it.